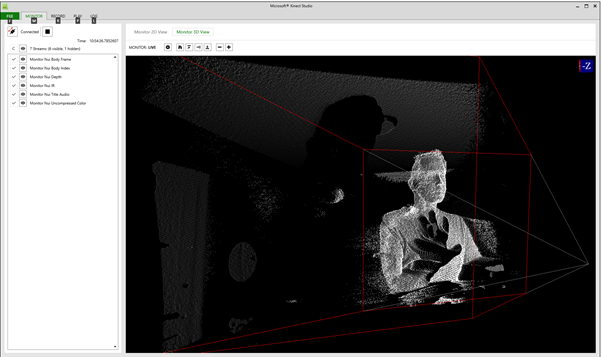

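The Azure Kinect Sensor SDK (com/Microsoft/Azure-Kinect-Sensor-SDK) provides `depth_image_to_point_cloud()`.

#Organized 3D Point Clouds#

We demonstrate Polylidar3D being applied to an organized point cloud, that is, a point cloud that keeps the row/column layout of the depth image it was generated from.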
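The SDK's `depth_image_to_point_cloud()` uses the device calibration to turn every depth pixel into an XYZ triple, and the output stays organized: one point per pixel, in image order. The sketch below illustrates the same idea with a plain pinhole model instead. It assumes hypothetical intrinsics `fx`, `fy`, `cx`, `cy`, a depth scale of 1000 raw units per metre, and no lens distortion; `depth_to_organized_cloud` is an illustrative name, not the SDK's implementation.

```python
from typing import List, Optional, Tuple

Point = Optional[Tuple[float, float, float]]


def depth_to_organized_cloud(
    depth: List[List[int]],
    fx: float, fy: float, cx: float, cy: float,
    depth_scale: float = 1000.0,
) -> List[List[Point]]:
    """Hypothetical helper: back-project a depth image into an organized cloud.

    Pinhole model only (no distortion). The output has the same H x W layout
    as the input; pixels with depth 0 (no measurement) become None so the
    grid structure is preserved.
    """
    cloud: List[List[Point]] = []
    for v, row in enumerate(depth):
        out_row: List[Point] = []
        for u, d in enumerate(row):
            if d == 0:
                out_row.append(None)  # invalid pixel keeps its slot
                continue
            z = d / depth_scale            # raw units -> metres (assumed scale)
            x = (u - cx) * z / fx          # column offset from principal point
            y = (v - cy) * z / fy          # row offset from principal point
            out_row.append((x, y, z))
        cloud.append(out_row)
    return cloud


if __name__ == "__main__":
    depth = [[1000, 0], [2000, 1000]]
    cloud = depth_to_organized_cloud(depth, fx=500.0, fy=500.0, cx=0.0, cy=0.0)
    print(cloud[1][0])
```

Keeping invalid pixels as `None` rather than dropping them is what makes the cloud "organized": neighbouring pixels stay neighbours, which algorithms that work on organized clouds can exploit.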

To convert the depth images into 3D point clouds, follow one of the following sets of instructions, depending on which dataset you downloaded. As you can see, there is still a large void. Open3D is an open-source library that provides a set of efficient data structures and algorithms for 3D data processing. This example is taken from the much more thorough script titled realsense_mesh. In Open3D, `clear()` clears all elements in a geometry. I would like to express the point clouds in world coordinates.
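Expressing a point cloud in world coordinates means mapping every camera-frame point through the camera pose, a 4x4 rigid transform whose top-left 3x3 block is a rotation R and whose last column is a translation t: p_world = R · p_cam + t. The sketch below assumes the matrix is camera-to-world; if you only have a world-to-camera extrinsic, invert it first. The helper name and the example pose are hypothetical. In Open3D, `PointCloud.transform()` applies a 4x4 matrix to a point cloud in the same way.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def camera_to_world(points: List[Vec3], T_world_cam: Sequence[Sequence[float]]) -> List[Vec3]:
    """Hypothetical helper: map camera-frame points into the world frame.

    T_world_cam is assumed to be a camera-to-world 4x4 matrix
    [[R, t], [0, 0, 0, 1]], so p_world = R @ p_cam + t.
    """
    world: List[Vec3] = []
    for p in points:
        # Row r of R dotted with p, plus the r-th translation component.
        world.append(tuple(
            sum(T_world_cam[r][c] * p[c] for c in range(3)) + T_world_cam[r][3]
            for r in range(3)
        ))
    return world


if __name__ == "__main__":
    # Hypothetical pose: 90-degree rotation about z, camera at (1, 2, 3).
    T = [[0, -1, 0, 1],
         [1, 0, 0, 2],
         [0, 0, 1, 3],
         [0, 0, 0, 1]]
    print(camera_to_world([(1.0, 0.0, 0.0)], T))
```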

#Kinect SDK download#

m' link to launch its download in your browser. Open3D can also be used to compute the distance between a mesh and a point cloud. Open3D provides a convenient visualization function, `draw_geometries`, which takes a list of geometry objects (`PointCloud`, `TriangleMesh`, or `Image`) and renders them together.
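One common way to approximate the distance between a mesh and a point cloud is to sample points on the mesh surface (in Open3D, for example, with `TriangleMesh.sample_points_uniformly`) and then, for every point in the cloud, take the distance to its nearest sampled point. Open3D's `PointCloud.compute_point_cloud_distance` computes these nearest-neighbour distances between two clouds efficiently; the brute-force sketch below only illustrates what is being measured. The helper name is hypothetical, and with a sampled mesh the result can overestimate the true point-to-surface distance.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def cloud_to_cloud_distance(source: List[Vec3], target: List[Vec3]) -> List[float]:
    """Hypothetical helper: for each source point, the Euclidean distance to
    its nearest target point.

    Brute force, O(len(source) * len(target)); only suitable for small clouds.
    A KD-tree is the usual choice for real data.
    """
    if not target:
        raise ValueError("target cloud must not be empty")
    return [min(math.dist(p, q) for q in target) for p in source]


if __name__ == "__main__":
    cloud = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
    mesh_samples = [(0.0, 0.0, 1.0), (3.0, 4.0, 0.0)]  # stand-in for sampled mesh points
    print(cloud_to_cloud_distance(cloud, mesh_samples))
```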

While the primary focus is on object detection in digital images from cameras and videos, this tutorial also introduces object detection in 3D point clouds. `xyz_image` must have a stride in bytes of at least 6 times its width in pixels (three 16-bit values, X, Y and Z, per pixel). While labeling, labelCloud creates 3D bounding boxes over point clouds.

I think I need metadata from the point cloud, such as the width and height of the image. For each point in the point cloud, I calculate the (u, v) coordinates in the target image and the depth value.

Is Open3D using the power of the GPU on a Mac? How can a live feed from a normal camera be shown in Open3D?

In the top right corner, click the small save icon ("Export 3D model to PLY format"). However, when the sample app rotates the point cloud slightly, the 3D shape of the point cloud becomes apparent to the user.

Where do I find the camera intrinsic parameters on the R200 to go about doing this? Also, if possible, how would I align the depth image to match the RGB image? Thanks. I use ROS Melodic on Ubuntu 18.04.

A `RawDepthImage` is designed to contain unsigned short images (usually depth in millimeters), while a `DepthImage` is meant to contain float images (usually depth in meters).

To tackle the perception challenges posed by transparent objects, we propose TranspareNet, a joint point cloud and depth completion method with the ability to complete the depth of transparent objects.

Hi, I am trying to insert points from a point cloud into an Open3D octree. The camera can be either the depth camera or the color camera.
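Calculating the (u, v) coordinates and depth of each point in a target image is a pinhole projection, u = fx · x / z + cx and v = fy · y / z + cy. When several points land on the same pixel, only the nearest one should be kept (a z-buffer). The same procedure is one way to align a depth image to an RGB image: express the depth points in the RGB camera's frame, then project them with the RGB intrinsics. The sketch below assumes the points are already in the target camera's frame, uses hypothetical intrinsics, and ignores lens distortion; `project_to_depth_image` is an illustrative name.

```python
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


def project_to_depth_image(
    points: List[Vec3],
    fx: float, fy: float, cx: float, cy: float,
    width: int, height: int,
) -> List[List[Optional[float]]]:
    """Hypothetical helper: render points (already in the target camera frame)
    into a width x height depth image, keeping the nearest depth per pixel.

    Pixels that receive no point stay None.
    """
    image: List[List[Optional[float]]] = [[None] * width for _ in range(height)]
    for x, y, z in points:
        if z <= 0:
            continue  # behind (or on) the image plane: not visible
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            current = image[v][u]
            if current is None or z < current:  # z-buffer test
                image[v][u] = z
    return image


if __name__ == "__main__":
    pts = [(0.0, 0.0, 2.0), (0.0, 0.0, 1.0), (0.1, 0.0, 1.0)]
    img = project_to_depth_image(pts, fx=10.0, fy=10.0, cx=1.0, cy=1.0, width=3, height=3)
    for row in img:
        print(row)
```

Rounding to the nearest pixel is the simplest choice; it leaves holes when the target image has a higher resolution than the source depth map, which is one reason aligned depth images often look sparse.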

declares objects to store depth images and point clouds. This combines the depth data with the captured color information to generate a colored 3D point cloud.

Each bounding box is defined with 10 parameters in labelCloud: one for the object class and nine for the box itself.

In PyVista, a random point cloud can be created with `points = np.random.rand(100, 3)` and turned into PolyData with `point_cloud = pv.PolyData(points)`. Any thoughts on how this can be done? I looked at using Example 1.

I'm trying to generate an Open3D point cloud from a depth image rendered in MuJoCo. The first 5 images I posted are the individual point clouds, spaced 72° apart.

Draco is a library for compressing and decompressing 3D geometric meshes and point clouds.

#Open3D depth image to point cloud#

That's slow and generates a relatively high CPU load.
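Five point clouds spaced 72° apart cover a full turn (5 × 72° = 360°). If the views come from rotating the object (or camera) about a single known axis, they can be merged by undoing each view's rotation and concatenating the results. The sketch below assumes a right-handed rotation about the y axis through the origin, with view k rotated by k × 72°; both the axis and the sign convention are assumptions to adapt to your setup, and real captures usually still need refinement with a registration method such as ICP. The helper names are hypothetical.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def rotate_y(points: List[Vec3], angle_deg: float) -> List[Vec3]:
    """Rotate points about the y axis (right-handed) by angle_deg degrees."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    # R_y = [[c, 0, s], [0, 1, 0], [-s, 0, c]]
    return [(c * x + s * z, y, -s * x + c * z) for x, y, z in points]


def merge_turntable_views(views: List[List[Vec3]], step_deg: float = 72.0) -> List[Vec3]:
    """Hypothetical helper: undo the assumed rotation of view k (k * step_deg)
    so every view is in the frame of view 0, then concatenate them."""
    merged: List[Vec3] = []
    for k, view in enumerate(views):
        merged.extend(rotate_y(view, -k * step_deg))
    return merged


if __name__ == "__main__":
    p = (1.0, 0.5, 0.0)
    # Simulate five captures of the same point on a turntable.
    views = [rotate_y([p], k * 72.0) for k in range(5)]
    for q in merge_turntable_views(views):
        print(tuple(round(c, 9) for c in q))
```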